Artificial Lifeforms

As real-world computers and machinery continue to become more complex and more powerful, it is not far-fetched to assume that they may become self-aware and perhaps sentient. This would ultimately require granting them the status of lifeforms. But where do we have to draw the line? Where does Star Trek draw the line? And has this line shifted since the 1960s?

Analysis

TOS

Although computers were commonplace, Star Trek needed some time to discover their storytelling potential. The computer of the Enterprise was depicted as nothing more than a tool that controlled the ship's systems, provided access to the databanks and performed scientific calculations. The "female personality" that the computer temporarily had in "Tomorrow is Yesterday" (after maintenance on a planet dominated by women) was more of a sexist joke than anything meant to be taken seriously.

Androids, too, showed up as early as the first season of TOS, in "What are Little Girls Made of?". The episode raised the question of whether androids are the better humans, and whether they should rule over humans and ultimately replace them. Yet, the question remained without further relevance to the story. As if to reaffirm the lack of interest in this issue, android bodies were constructed as receptacles for Sargon and his people in "Return to Tomorrow" but never activated.

Generally, even highly advanced alien androids, robots and computers were just regarded as machines at the time of TOS. No one truly posed the question of whether they should be classified as sentient beings or as lifeforms. The word "sentient" was hardly ever used in TOS anyway.

While the crew relentlessly hunted down strange but supposedly "natural" lifeforms more than once, such as in "Operation - Annihilate!", "Obsession" or "The Immunity Syndrome", there were other occasions on which sentient beings were saved in the spirit of "seeking out new life", such as in "The Devil in the Dark" or "Metamorphosis". A machine, on the other hand, was at most conceded the attribute of being intelligent at the time of TOS. Although there was never a hint that androids existed in the Federation or in Starfleet (unlike in the two reboots of this era, namely the Abramsverse and Discovery), the impression is that anyone like Data would have been denied the rights of an individual in the 23rd century.

Even more drastically, androids and machines routinely had to be destroyed or "talked to death", as happened with Landru in "The Return of the Archons", the war simulation computer in "A Taste of Armageddon", the planet killer in "The Doomsday Machine", Nomad in "The Changeling", Vaal in "The Apple", the androids in "I, Mudd" or M-5 in "The Ultimate Computer". No one really felt sorry about the loss. On the contrary, their superior intelligence was seen as a danger to humanity, and their destruction was celebrated as a liberation. Much like with genetically enhanced humans, there was something intrinsically villainous about autonomous computers and androids. They were either programmed to be "evil" or went out of control due to accidents, setting themselves the goal of ruling over biological lifeforms or exterminating them, as in TOS: "What are Little Girls Made of?", "The Ultimate Computer" or "I, Mudd". And even the few advanced machines that functioned as intended had something eerie about them, such as M-4 in TOS: "Requiem for Methuselah" (which may have something to do with the fact that the prop was a re-use of Nomad from "The Changeling").

Rayna, the female android in TOS: "Requiem for Methuselah", marks a notable exception. She was the first artificial lifeform in Star Trek to clearly exhibit not only sentience but also feelings. And it was the first time that this was acknowledged by the ship's crew, not least because Kirk fell in love with her. Rayna eventually "died" when she could not cope with her conflicting feelings (for Kirk and for Flint). While this outcome is still reminiscent of the computers that Kirk argued to death because they couldn't process contradictory commands or information, Rayna's tragic end also bears traits of the more or less illogical self-destructive tendencies of humans, of the kind that machines are just not supposed to exhibit. But Rayna's existence probably remained an isolated case anyway, because Flint died soon afterwards and may not have built other androids of her kind.

Another example of an artificial lifeform shown in a positive light is V'ger from "Star Trek: The Motion Picture", even though V'ger destroys anything in its flight path (apparently without knowing what killing a lifeform means in an ethical sense). In any case, V'ger's quest for its creator is another characteristic that is typical of lifeforms, not of machines.

TNG, DS9 and Voyager

The turning point in the question of the rights of artificial lifeforms in Star Trek was Data. It was obvious from the first episode that he was meant to be intelligent and probably sentient, although he himself had to admit that he was unable to experience emotions. He usually missed the point of fundamental human(oid) feelings and of humor, as in his fruitless efforts to be funny (TNG: "Unnatural Selection"). Still, he was a fully privileged graduate of Starfleet Academy, not an honorary Lt. Commander, as Riker suspected in TNG: "Encounter at Farpoint". Data must have been admitted to Starfleet Academy like any other cadet, and this would necessarily require that he had already been acknowledged as a sentient being back then, unless Starfleet wanted an army of mindless robots instead of (self-)responsible officers.

In consideration of Data's career, it is odd that in TNG: "The Measure of a Man" Commander Maddox could simply demand that Data be disassembled because, as he claimed, Data was Starfleet's property. Essentially the same happened again when Admiral Haftel demanded that Lal be handed over, with much the same justification, in TNG: "The Offspring". On a related note, even if Data was regarded not as a person but as a thing, how could Starfleet claim ownership of him, when he legally belonged to Dr. Noonien Soong? The only possible explanation is that some later legal ruling was used to override the terms of Data's entry into Starfleet. In any case, whether he was alive or not should have been decided long before he had served in Starfleet for years. And Data should have made sure, before building his new android, that no one could claim ownership of Lal.

The (preliminary) acknowledgment that Data is (or could be) a sentient and self-responsible lifeform may have facilitated and accelerated the process of recognizing the rights of other artificial lifeforms, such as the nanites in TNG: "Evolution" and the Exocomps in TNG: "The Quality of Life" (although in these two cases there are no legal proceedings that we know of).

Voyager's EMH, too, was struggling for acceptance in the first season of the series, until he was granted the right to decide for himself when to go offline. Much like Data in "The Measure of a Man", the EMH set a precedent for holograms to be recognized as individuals with rights of their own in VOY: "Author, Author". And while I think the idea of medical holograms working in a mine, as seen in "Author, Author", should be taken with a grain of salt, acknowledging the rights of new lifeforms is an ongoing process in the Federation, and there is no foreseeable end to it.

Furthermore, in some cases respecting the rights of evolving artificial life may have severe consequences for human beings, possibly at the expense of their security or even their lives. The nanite incident in TNG: "Evolution" almost turned into a disaster, and we can only speculate what would have happened if the Enterprise-D had chosen a less moderate way to procreate in TNG: "Emergence". Like V'ger before them, the evolving artificial intelligences of TNG seem to have forgotten or to ignore the respect for biological lifeforms, although something like "ethical subroutines" (based on Asimov's Laws of Robotics) must have been part of their original programming.

Even though artificial life is shown in an overall positive light this time (as "evolution" is unequivocally deemed a worthwhile process in Star Trek), there is still something unpredictable about it, like a remnant of the villainous machines of TOS. Even Data himself occasionally runs amok, notably in "Brothers" and in "Insurrection". While we should not forget that human beings are overall much more "fault-prone", the severity of machine faults is much higher, especially when fail-safe mechanisms fail or are overridden. But seeing that no one generally seems to mistrust holodecks either, although they fail quite often (actually much too often to still be considered safe), the possible fear of androids and other artificial lifeforms should not be a reason for the Federation to impede their development.

Discovery

In "The Vulcan Hello" and "Battle at the Binary Stars", the USS Shenzhou has an apparently robotic crew member whose nature remains unexplained. Much of the second season of Star Trek: Discovery is governed by the fight against a powerful artificial intelligence named "Control" that was created by Section 31. The question of whether Control is a lifeform that deserves to be protected as such is never posed, considering that the AI, in a future stage of its evolution, is predicted to eradicate all organic life in the galaxy. The whole conflict and the very existence of Control are eventually swept under the rug in "Such Sweet Sorrow II".

Picard season 1

Androids have become commonplace in the Federation by the 2380s. But after Commander Data's death and the failure to keep B-4 active, androids are more like simple-minded machines that perform routine tasks, such as the android workers on Mars in PIC: "Maps and Legends". It also becomes obvious that these "Synths" are not accepted as lifeforms by the humanoid colleagues they are supposed to work with. The open disdain for the "plastic people" is part of the story and part of the overall concept of Star Trek: Picard that selfishness and fear govern the leaders as well as the common people. When the Synths attack and destroy the facilities on Mars, ostensibly as a "rebellion of the machines" but actually under the control of Commodore Oh, this already existing sentiment seems to have bolstered the decision to ban Synths once and for all. We do not know the exact reason, but Synths were probably deemed uncontrollable and therefore dangerous.

Most likely all existing Synths were not just deactivated but destroyed. This would have been a clear case of genocide, had they already been acknowledged as lifeforms. Research on synthetic lifeforms was banned, and scientists such as Bruce Maddox or Altan Soong had to work on their projects in secret places.

It is interesting to note, however, that the ban didn't include holograms. We could see a hologram called Index in PIC: "Remembrance", and Rios has no fewer than five emergency holograms on La Sirena. Considering that the capabilities of holograms are at least equal to those of androids, it is not clear why only the latter were outlawed. A possible rationale is that androids are usually completely autonomous. Data's secret off-switch was the only interface the android couldn't control himself. Everything else he said and did was his very own decision. This is somewhat different with holograms, which always need a control unit and a projection unit. The interface is always accessible (well, unless there is a holodeck failure...), and the hologram can be shut down in case of a malfunction. Also, banning holographic helpers would ultimately mean shutting down all holodecks and holographic interfaces, which come with varying degrees of autonomous functions.

Soong and Maddox secretly continued their work on synthetic lifeforms, and they took it to a new level. Instead of creating androids that are essentially machines shaped like humanoids, they came up with a new generation of Synths that, if their appearance is designed accordingly, are perfect replicas of humanoids. Dahj and Soji are beings of flesh and blood that are indistinguishable from humans, even in medical scans, and that are not aware of their artificial nature. There is one historical precedent, namely Juliana Tainer (TNG: "Inheritance"), which remains unmentioned in the series (probably in order not to confuse casual viewers). However, there is still a difference. Juliana Tainer turned out to be an android on closer examination. Her body functions were imitated with electromechanical components rather than being truly organic. This does not seem to be the case with Dahj and Soji, whose bodies appear to be those of real human beings. They are repeatedly said to be descendants of Data in some fashion (as indicated by Data's painting named "Daughter" in "Remembrance" and by Soji's tilting of her head), but the concept of "cloning" an android "from a single neuron" never becomes clear, just as it remains mysterious why these androids would have to be created as twins.

Ultimately, Jean-Luc Picard himself becomes a sort of artificial lifeform when his brain patterns are transferred into a new body after his death in "Et in Arcadia Ego II". The golem that Soong created and that Jurati prepared for Picard doesn't seem to be an android body but more like a clone, although a clone would have to grow in some "natural" way, whereas the golem was definitely built. The result, in any case, is a body for Picard that is indistinguishable from the one he lost, minus the illness that is identified as Irumodic Syndrome at the time.

It has been a recurring topic not just in Star Trek: Picard but ever since TNG that a "perfect" android would be one that looks, thinks and feels like a humanoid being. Everyone on the Enterprise was amazed by the perfection of Juliana Tainer's components in TNG: "Inheritance". Likewise, in PIC: "Broken Pieces", Jurati is absolutely fascinated that Soji has normal body functions such as eating, drinking or sleeping. On second thought, however, this fixation on making androids perfect copies of human beings is odd. Isn't an android that needs no sleep and that can be recharged at any wall socket, instead of requiring a digestive system, the more advanced one? Why create androids that are exactly like "the real thing" at all, unless humanity had become sterile? Is this just scientific and technological curiosity about what is possible, or does it serve a real purpose?

Yet, it is possible that Soong's "androids of flesh and blood" and golems will become more widespread after the ban on synthetic life has been lifted. Perhaps human and humanoid civilizations will more readily accept artificial lifeforms that are just like them, and most importantly without any superior abilities. Data blurred the difference between biological and artificial life, Dahj and Soji almost removed it, and Picard has been both at once since "Et in Arcadia Ego II". There is no longer any ethical rationale for imposing restrictions on synthetic lifeforms of this kind, although the problem of how to deal with anyone or anything less "advanced", and where to draw the line, still remains.

Picard season 3

The third season of Picard adds a new chapter not only to the history of Data but also to that of androids in general. In PIC: "The Bounty", Raffi, Worf and Riker find Daystrom Android M-5-10 on Daystrom Station, designed by Altan Soong to incorporate elements of Lal, B-4, Lore and Data. Well, the circumstances are rather odd. For one, Soong calls his creation a "golem", equating it with Picard's new body, although the next few episodes highlight the built-in projector and the superior computing capabilities and thereby firmly establish that this is an android and not an artificial replica of a human body. Also, Soong's mention of the "true human aesthetic of age" is more like an excuse to allow Brent Spiner to play this role without digital manipulation. If the intention had been to let the android experience aging, Soong would have incorporated some "aging program" rather than giving him wrinkles and gray hair from the start. Furthermore, the fact that in PIC: "Surrender" only Lore and Data are struggling for control of the mind of M-5-10 calls into question the statement that there is something of Lal and B-4 in him as well.

Anyway, these weaknesses of the story aside, there is a new android that is supposed to have inherited the traits of previous Soong creations. This initially leads to some sort of multiple personality disorder in PIC: "Dominion", which is turned into a direct confrontation between the Data and the Lore portions as Geordi removes the partition between them in PIC: "Surrender". As Data gradually hands over all his memories to Lore and becomes weaker in the process, something remarkable happens. Lore takes in all of Data's memories, which he dismisses as worthless trinkets, but he doesn't notice that he thereby becomes Data himself: "You took the things that were me, and in doing so... you have become me." So did the memories stored in his positronic brain really make up everything that was Data? Since he was an android, the answer is probably yes. But if this is so, how could Lore become Data? Possibly Lore had far fewer or far weaker experiences that defined his personality. His being "evil" or "unstable" was never something that he acquired; it was ingrained in his original programming, as we learned as early as TNG: "Datalore". It looks like the wealth of information transferred from Data to Lore's largely empty memory could "heal" this condition. This also means that Lore may have had the potential to change by himself if given enough time and opportunities. On the other hand, we don't really know to what extent Data and Lore were really present in a single body and mind. There is a lot of room for interpretation, also regarding the visualization. It looks like the Data part "dies", then reappears and takes over Lore, upon which Lore "dies". This doesn't look like a merger of the two, and it doesn't match what they are saying.

Is the new android, who calls himself Data and who has entered a new level of sentience, the same person as Data? Technically, Data died in "Star Trek Nemesis", his active memory record died in PIC: "Et in Arcadia Ego II", and he arguably died a third time in PIC: "Surrender". If a human being went through similar iterations, we would argue that the consciousness or the soul would be lost each time and only the memory would be preserved. The transfer of memories into a new body and brain would just create a copy that believes it is still the very same person. While the transporter can be considered safe because several episodes provide evidence of people staying conscious while caught in the transporter beam, the question has been posed whether the Picard golem is still Picard. A definite answer is not really possible. In the case of Data, we could argue that he may not have a soul in a metaphysical sense. As already mentioned, everything that made up Data was his memory, and it seems that this memory could be stored and copied indefinitely. Then again, what is the difference between the android and the somewhat different type of machine that is a human being? Is consciousness an illusion? There are reasons to believe that whatever is true for biological lifeforms also applies to androids.

Lower Decks

There are three recurring evil artificial intelligences in Lower Decks: the hologram Badgey, the exocomp Peanut Hamper and the computer AGIMUS. Additionally, there is a "megalomaniacal computer storage" at the Daystrom Institute with many more mad computers, which are subjected to various therapies, as seen in LOW: "A Few More Badgeys". Moreover, an experiment to develop a self-aware starship class goes horribly wrong in LOW: "The Stars at Night". Although the theme of AIs wanting to destroy (Badgey, Texas class) or enslave (AGIMUS, Peanut Hamper) organic lifeforms is primarily played for laughs in the series, it fits the tone of classic Star Trek in particular, where intelligent machines were regarded as dangerous. The fact that AIs with mental health problems receive therapy and are not simply switched off and dismantled, on the other hand, is a sign that they may be acknowledged as sentient beings.

Conclusion

Androids, computers and other intelligent machines were predominantly seen as pieces of technology in the 1960s, not so much as possible sentient beings. If they exhibited human characteristics in the era of TOS, this almost customarily endangered the human crew, which became a cliché of TOS. But their possibly villainous behavior was not condemned as such, because the androids or other machines were merely acting within the boundaries of their programming.

This changed with Data. Since the remarkable episode "The Measure of a Man", there has been an ongoing trend to recognize the rights not only of androids but also of other artificial lifeforms such as holograms. While technology goes out of control just as frequently as in TOS, in the 24th century the focus of interest is on whether androids and machines can surpass their original programming. Overall, the 24th century seems to be somewhat more open-minded about artificial lifeforms. This may be due to developments in the real world, where today's computers are far more advanced than anyone could have imagined in the 1960s. The question of whether computers may become sentient has become a more interesting science fiction topic than it was at the time of TOS.

The development in Star Trek: Picard is interesting because it goes in two extreme directions. After a complete ban on androids, triggered by the attack on Mars and motivated by fear, it shows how the differences between biological and artificial life are ultimately blurred and become meaningless, at least as far as very "advanced" androids that are exact replicas of human bodies are concerned. The resurrection of Data in the third season of Star Trek: Picard may seem like an unwarranted plot twist, but the way this Data enters a new plane of existence is both in line with other recent developments and a logical next step in the android's personal evolution.

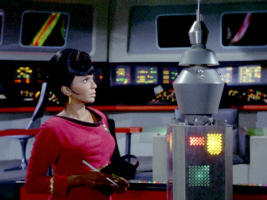

Andrea and Ruk in TOS: "What are Little Girls Made of?"